Semantic Dissonance: The Silent Failure Mode of Multi-Agent AI Systems

When agents share a channel but not a contract, coherence collapses without warning — and domain-specific languages may be the only reliable remedy.

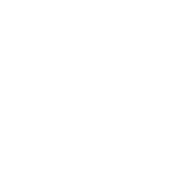

The most dangerous failure in a distributed system is the one that doesn't announce itself. A crashed process raises an alert. A malformed packet triggers a parse error. But an agent that understands your words differently than you intended — that agent completes its task successfully, returns a well-formed response, and silently moves the system further from where you wanted it to go. This is semantic dissonance, and it is endemic to the current generation of multi-agent AI architectures.

Having worked extensively with Langium-based domain-specific languages as a coordination substrate, I have grown increasingly convinced that what the field calls "alignment problems" are, at the operational layer, fundamentally semantic problems. Agents do not fail only through malice. They also fail because they lack a shared semantic constitution: a formally enforced agreement about what words mean, what structures are valid, and what operations are permitted in a given context.

This essay develops a working taxonomy of semantic dissonance, applies the analytical frameworks of deontic logic and Hohfeldian jurisprudence to the problem of agent permissions, and argues that grammar-defined DSLs are not merely a convenience but a structural necessity for coherent multi-agent systems.

Semantic dissonance refers to the failure mode in multi-agent systems where agents share a communication channel but operate under divergent or under-specified semantic contracts, producing outputs that are locally coherent but globally incoherent or misaligned with system intent.

The naive view of multi-agent communication treats it as a routing problem: get the right message to the right agent. The slightly more sophisticated view adds a schema layer: ensure messages conform to a shared data contract. Both views are necessary but neither is sufficient, because they address surface structure while leaving deep structure undefined.

Consider two agents trained on different corpora, operating under different system prompts, coordinating on a task described in natural language. They share the token stream. They do not share the interpretation. When Agent A uses the word "policy", it may mean a procedural guideline. When Agent B parses the same token, it may activate representations from insurance, from government regulation, or from reinforcement learning, where a policy is a mapping from states to actions. The communication succeeds. The coordination fails.

This failure is compounded by the fluency of large language models. Unlike typed systems, where a mismatch produces a compile error, LLM agents can generate plausible-sounding responses in any semantic register. They are extraordinarily good at appearing to understand. This makes semantic dissonance far more dangerous in LLM-based systems than in classical distributed architectures, where brittle interfaces at least fail loudly.

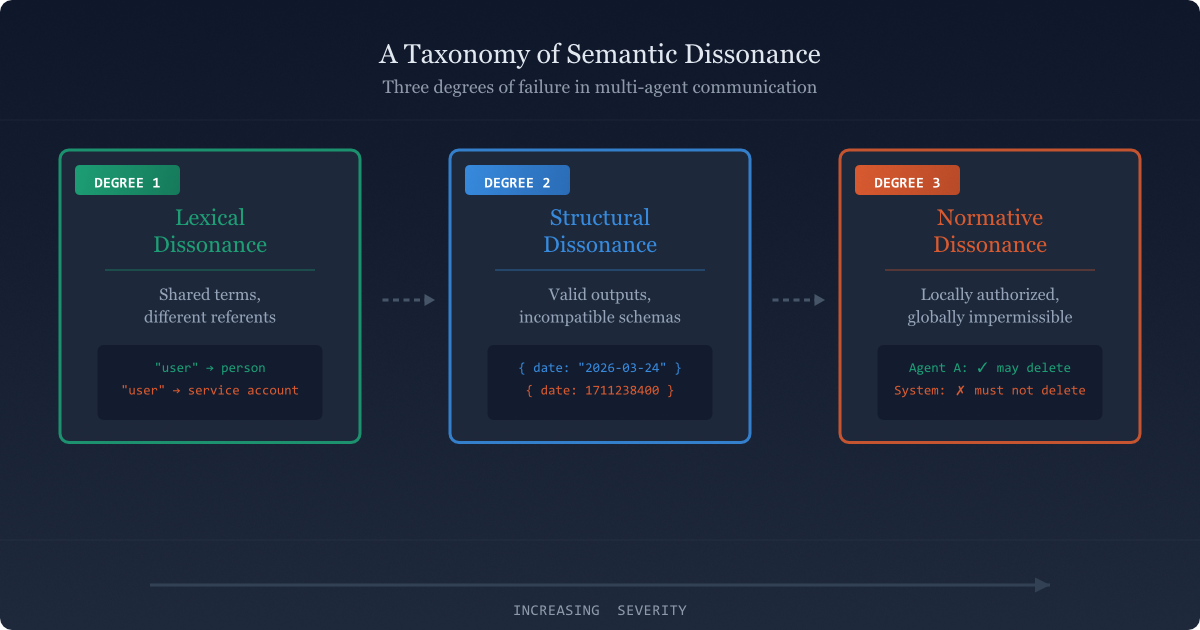

Not all semantic failures are the same. Distinguishing among them matters because each type has different causes, different detection signatures, and different mitigation strategies. I propose three principal degrees.

Lexical dissonance is the most pervasive and the least visible. It arises when the same signifier maps to different signifieds across agents. In classical linguistics this is polysemy; in distributed systems it is a missing shared ontology. The symptoms look like miscommunication but are actually mis-grounding: the agents are not talking about the same things even when they use the same words.

In a Langium-based DSL system, lexical dissonance maps directly to the problem of undefined or ambiguous terminal rules. A grammar that allows freeform strings where it should require enumerated tokens is an invitation to lexical drift. The remedy at the grammar level is explicit cross-reference resolution: every term that carries semantic weight should be a typed reference to a declared entity, not a raw string literal that each agent interprets independently.

```langium
// Vulnerable: free string, each agent interprets independently
DataSubject: name=ID category=STRING;

// Resilient: enumerated, ontology-grounded
DataSubject: name=ID category=SubjectCategory;

SubjectCategory returns string: 'consumer' | 'employee' | 'minor' | 'dependent';
```

The grammar is not merely validating syntax here. It is enforcing a shared ontological commitment. Any agent that parses against this grammar knows exactly what the term `minor` denotes in this system's universe of discourse: one of four declared categories, fixed by the grammar rather than by the agent's training distribution.
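The same commitment can be mirrored in the TypeScript code that usually sits around a Langium grammar. The sketch below is hand-written, not Langium-generated, and the names `SUBJECT_CATEGORIES`, `isSubjectCategory` and `parseDataSubject` are hypothetical. It shows the practical effect of a closed vocabulary: an undeclared term such as "child" is rejected at the boundary instead of being forwarded as a raw string for each agent to interpret.

```typescript
// Hypothetical closed vocabulary mirroring the SubjectCategory rule above.
const SUBJECT_CATEGORIES = ["consumer", "employee", "minor", "dependent"] as const;
type SubjectCategory = (typeof SUBJECT_CATEGORIES)[number];

interface DataSubjectNode {
  subjectName: string;
  category: SubjectCategory;
}

// Type guard: true only for terms declared in the shared vocabulary (exact match).
function isSubjectCategory(term: string): term is SubjectCategory {
  return (SUBJECT_CATEGORIES as readonly string[]).indexOf(term) !== -1;
}

// Undeclared terms fail loudly instead of drifting silently between agents.
function parseDataSubject(subjectName: string, term: string): DataSubjectNode {
  if (!isSubjectCategory(term)) {
    throw new Error(`Unknown subject category "${term}" for subject "${subjectName}"`);
  }
  return { subjectName, category: term };
}

console.log(parseDataSubject("alice", "minor").category); // minor

try {
  parseDataSubject("bob", "child"); // a synonym the grammar never declared
} catch (err) {
  console.log((err as Error).message);
}
```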

Structural dissonance occurs when agents agree on vocabulary but produce outputs that cannot be composed. Each message is internally valid; the interface between messages is not. This is the classical schema incompatibility problem, but in LLM multi-agent systems it takes a more insidious form: agents can generate output that appears to conform to an expected structure while violating it in ways that only manifest downstream.

The failure mode is particularly acute in agentic pipelines where the output of one agent becomes the input prompt context for another. If Agent A produces a structured analysis and Agent B expects that analysis in a different organization — different field ordering, different nesting conventions, different assumptions about what constitutes a "section" — then B will misparse A's output silently, constructing a corrupt internal representation that drives subsequent actions.

The mitigation here is schema pinning at the grammar level: the communication format is not a convention or a best-practice guideline but a formal production rule that both agents parse against. In my experimental multi-agent system, agent message formats are treated as first-class language constructs in the DSL, not as JSON schemas that live in documentation and erode over time.
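The property that matters is that both sides run the same executable parser, not that the wire format takes any particular shape. As a stand-in for a grammar production, here is a minimal TypeScript sketch of a pinned message format; `AnalysisMessageV1` and `parseAnalysisMessage` are hypothetical, and JSON is used only to keep the example short. Anything that deviates from the pinned shape, including an extra field, fails at the boundary rather than being silently reinterpreted downstream.

```typescript
// Hypothetical pinned format shared by producer and consumer agents.
interface AnalysisSection {
  heading: string;
  body: string;
}

interface AnalysisMessageV1 {
  formatVersion: 1;
  topic: string;
  sections: AnalysisSection[];
}

const PINNED_KEYS = ["formatVersion", "topic", "sections"];

function asRecord(value: unknown, what: string): Record<string, unknown> {
  if (typeof value !== "object" || value === null || Array.isArray(value)) {
    throw new Error(`${what} must be an object`);
  }
  return value as Record<string, unknown>;
}

// Strict parse: every deviation from the pinned shape is an error, not a guess.
function parseAnalysisMessage(raw: string): AnalysisMessageV1 {
  const msg = asRecord(JSON.parse(raw), "message");
  for (const key of Object.keys(msg)) {
    if (PINNED_KEYS.indexOf(key) === -1) throw new Error(`unexpected field "${key}"`);
  }
  if (msg.formatVersion !== 1) throw new Error("unsupported formatVersion");
  const topic = msg.topic;
  if (typeof topic !== "string") throw new Error("topic must be a string");
  const rawSections = msg.sections;
  if (!Array.isArray(rawSections)) throw new Error("sections must be an array");
  const sections = rawSections.map((s: unknown, i: number): AnalysisSection => {
    const sec = asRecord(s, `section ${i}`);
    const heading = sec.heading;
    const body = sec.body;
    if (typeof heading !== "string" || typeof body !== "string") {
      throw new Error(`section ${i} needs string heading and body`);
    }
    return { heading, body };
  });
  return { formatVersion: 1, topic, sections };
}

const good = parseAnalysisMessage(
  '{"formatVersion":1,"topic":"churn","sections":[{"heading":"Summary","body":"Flat"}]}',
);
console.log(good.sections.length); // 1
```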

Normative dissonance is the most consequential degree. It occurs when agents operate under divergent or unspecified models of what they are permitted to do, obligated to do, and forbidden from doing. An agent may produce output that is lexically grounded, structurally valid, and yet normatively inadmissible — because the system has no formally enforced permission architecture.

This is the degree that connects most directly to the emerging discourse on AI safety, but it is important to locate it precisely: normative dissonance is not primarily a values alignment problem at the level of human flourishing. It is a technical architecture problem at the level of inter-agent protocol. A system that allows agents to take actions without a formally specified deontic context is a system that will produce normative dissonance as a matter of course — regardless of how well-aligned the individual agents are at the level of their training.

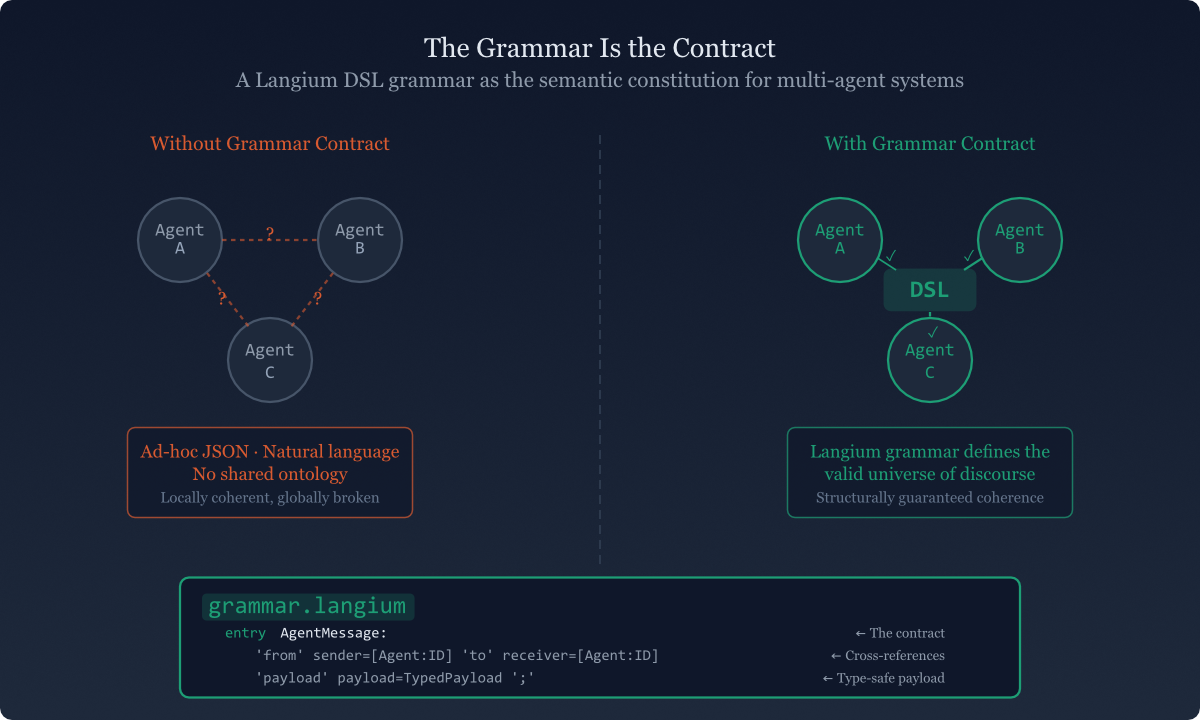

The analogy I keep returning to is constitutional law. A grammar does for an agent system what a constitution does for a polity: it defines not just the rules of procedure but the valid universe of discourse. It specifies what can be said, what can be done, and what relationships among actors are legally coherent.

In Langium, a grammar is an executable specification. It is not documentation. It is not a schema that agents are expected to follow voluntarily. It is a formal artifact that either accepts or rejects any candidate communication, with deterministic parse results that every participant in the system can rely on. This is the crucial distinction between convention-based coordination and grammar-based coordination.

A Langium grammar is not a description of how agents should communicate. It is a formal constraint on what they are capable of communicating. The grammar doesn't guide agent behavior — it defines the boundary conditions within which behavior is possible. Everything outside those boundaries is not merely discouraged; it is, by construction, inexpressible in the system's language.

This has profound implications for how we think about agent composition. When two agents that both operate under the same Langium grammar interact, lexical dissonance is structurally precluded — the grammar enforces a shared ontology. Structural dissonance is architecturally prevented — the parse tree is the communication, and it is unambiguous. What remains is normative dissonance, which requires a separate layer: a deontic logic specification embedded in or alongside the grammar.

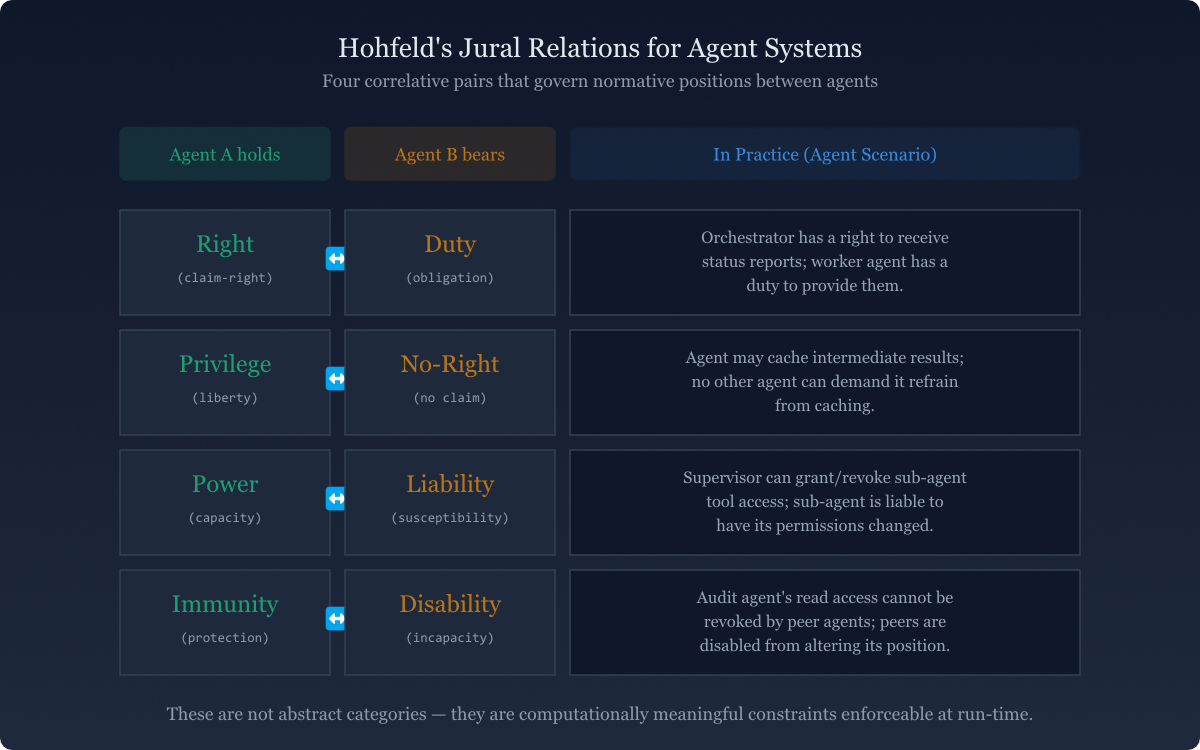

Classical deontic logic gives us three modal operators: obligation (O), permission (P), and prohibition (F). An agent is obligated to perform an action, permitted to perform it, or forbidden from performing it. This is a useful starting vocabulary but it is underspecified for multi-agent systems, because it doesn't account for the relational nature of permissions — the fact that a permission is always a permission with respect to some other party.
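In standard deontic logic (SDL), obligation is usually taken as primitive and the other two operators are defined from it. For a proposition p describing an action:

```latex
\begin{aligned}
P\,p &\equiv \neg O\,\neg p \\
F\,p &\equiv O\,\neg p \\
O\,p &\rightarrow P\,p \qquad \text{(axiom D)}
\end{aligned}
```

Every formula here concerns a single agent's action; none names the party to whom the obligation or permission is owed, which is exactly the gap a relational framework has to fill.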

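Wesley Newcomb Hohfeld's analysis of legal relations, developed in 1913 and still the most rigorous framework in jurisprudence for reasoning about rights, offers a more powerful substrate. Hohfeld distinguished eight fundamental legal relations, organized in two tables: jural opposites and jural correlatives. Each position correlates with one held by the other party (right/duty, privilege/no-right, power/liability, immunity/disability):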

| Jural Position | What it means for the holder | Agent system analog |
|---|---|---|
| Right | Can demand action from another | Agent A can require Agent B to respond |
| Privilege | No duty to refrain from action | Agent A may invoke a capability without obligation to justify |
| Power | Can alter jural relations of another | Orchestrator can expand or restrict Agent B's permission set |
| Immunity | Cannot have jural position altered by another | Agent A's core constraints cannot be overridden by peer agents |
| Duty | Must act for the benefit of another's right | Agent B must return structured output when Agent A holds a right to it |
| No-right | Cannot demand action from another | Agent A cannot compel Agent B outside its defined interface |
| Liability | Power-holder can alter one's jural position | Agent B's capabilities can be dynamically modified by authorized orchestrator |
| Disability | Cannot alter another's jural position | Peer agents cannot grant each other elevated permissions |

Applying Hohfeld's framework to multi-agent systems gives us a four-layer permission stack that maps directly onto architectural decisions in DSL design.
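The correlative pairing that runs through all four layers can be stated as data. A minimal sketch, with hypothetical names (`JuralPosition`, `CORRELATIVE`, `OPPOSITE`, `correlate`), encoding Hohfeld's two tables so that declaring one party's position mechanically yields the other party's:

```typescript
type JuralPosition =
  | "right" | "duty" | "privilege" | "no-right"
  | "power" | "liability" | "immunity" | "disability";

// Correlatives: if A holds X against B, then B holds CORRELATIVE[X] toward A.
const CORRELATIVE: Record<JuralPosition, JuralPosition> = {
  right: "duty", duty: "right",
  privilege: "no-right", "no-right": "privilege",
  power: "liability", liability: "power",
  immunity: "disability", disability: "immunity",
};

// Opposites: a holder's position negates its opposite
// (for privilege/duty: a privilege to act negates a duty to refrain).
const OPPOSITE: Record<JuralPosition, JuralPosition> = {
  right: "no-right", "no-right": "right",
  privilege: "duty", duty: "privilege",
  power: "disability", disability: "power",
  immunity: "liability", liability: "immunity",
};

interface JuralRelation {
  holder: string;
  counterparty: string;
  position: JuralPosition;
  matter: string;
}

// Derive the counterparty's side of a declared relation.
function correlate(rel: JuralRelation): JuralRelation {
  return {
    holder: rel.counterparty,
    counterparty: rel.holder,
    position: CORRELATIVE[rel.position],
    matter: rel.matter,
  };
}

const orchestration: JuralRelation = {
  holder: "orchestrator",
  counterparty: "agentB",
  position: "power",
  matter: "permission-set",
};
console.log(correlate(orchestration).position); // liability
```

Declaring one side and deriving the other keeps the pairs from drifting apart, which is the same discipline the grammar is meant to impose on interface contracts.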

The grammar defines which agents hold rights to demand responses from which other agents, and what duties correspond. In Langium terms, this is the interface contract: when Agent A invokes a defined production rule, it is exercising a right; Agent B parsing that production has a correlative duty to produce a conformant response. The grammar enforces this pairing structurally.

Privileges define what agents may do without requiring justification. An agent that processes consent records has a privilege to read those records but, crucially, no privilege to read records in a different consent tier (in Hohfeld's terms, a duty to refrain, since duty is the opposite of privilege). This maps to the tiered data architecture in a multi-agent analytics schema: the grammar's type system encodes privilege scope, and crossing tier boundaries is not a policy violation but a parse failure.
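One way to make a tier boundary behave like a language failure rather than a policy decision is scoping: a reference resolves only against names visible from the referrer's tier. Langium resolves cross-references through scopes, but the sketch below does not use its API; it is a plain TypeScript imitation with hypothetical names (`ConsentTier`, `RECORDS`, `scopeFor`, `resolveRecord`). An out-of-tier read never reaches a permission check, because the reference itself fails to resolve, much as an unresolved cross-reference surfaces as a linking error.

```typescript
type ConsentTier = "basic" | "sensitive" | "restricted";

interface ConsentRecordEntry {
  id: string;
  tier: ConsentTier;
}

// Hypothetical record index; in a DSL these would be declared entities.
const RECORDS: ConsentRecordEntry[] = [
  { id: "c-101", tier: "basic" },
  { id: "c-202", tier: "sensitive" },
];

// The scope for an agent contains only records in its own tier.
function scopeFor(agentTier: ConsentTier): ConsentRecordEntry[] {
  return RECORDS.filter((rec) => rec.tier === agentTier);
}

// Resolve like a linker: the name is either in scope or, for this agent, does not exist.
function resolveRecord(agentTier: ConsentTier, recordId: string): ConsentRecordEntry {
  for (const rec of scopeFor(agentTier)) {
    if (rec.id === recordId) return rec;
  }
  throw new Error(`Could not resolve consent record "${recordId}" from tier "${agentTier}"`);
}

console.log(resolveRecord("basic", "c-101").id); // c-101
```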

Powers allow some agents to alter the jural positions of others — to expand or restrict permission sets dynamically. In a multi-agent system, this is orchestration authority. The liability layer specifies which agents can be reconfigured by which other agents. Without formal specification of powers and liabilities, you get the most dangerous form of normative dissonance: agents that grant each other permissions that neither individually possesses. The system escalates privilege through the combinatorial composition of locally-authorized agents.
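A minimal sketch of power and liability scoping, assuming every permission change is expressed as a `Grant` and every power is declared up front (`PowerDecl`, `POWERS` and `validateGrant` are hypothetical). A grant is accepted only when a declared power backs it; any agent without one is treated as under a disability, so peers cannot compose their way into a permission that no one was empowered to confer.

```typescript
interface PowerDecl {
  holder: string;        // who may alter the grantee's permissions
  subjectAgent: string;  // whose permissions may be altered (bears the liability)
  permissions: string[]; // which permissions the holder may confer
}

interface Grant {
  granter: string;
  grantee: string;
  permission: string;
}

// Hypothetical declared powers: only the orchestrator may reconfigure agents.
const POWERS: PowerDecl[] = [
  { holder: "orchestrator", subjectAgent: "analyst", permissions: ["read:basic"] },
  { holder: "orchestrator", subjectAgent: "reporter", permissions: ["read:basic", "write:report"] },
];

// Returns null for a valid grant, otherwise a diagnostic. Absence of a declared
// power is treated as a disability, so the grant is rejected.
function validateGrant(grant: Grant): string | null {
  for (const p of POWERS) {
    if (
      p.holder === grant.granter &&
      p.subjectAgent === grant.grantee &&
      p.permissions.indexOf(grant.permission) !== -1
    ) {
      return null;
    }
  }
  return `${grant.granter} has no power to grant "${grant.permission}" to ${grant.grantee}`;
}

console.log(validateGrant({ granter: "orchestrator", grantee: "analyst", permission: "read:basic" })); // null
console.log(validateGrant({ granter: "analyst", grantee: "reporter", permission: "read:sensitive" }));
```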

Immunities define what cannot be altered regardless of orchestration authority. These are the constitutional provisions that no power can override. In a consent management system, an agent processing data for a minor holds an immunity: no peer agent and no dynamic orchestration instruction can alter the constraint that processes involving minor data require explicit guardian consent. This immunity must be encoded in the grammar — not as a runtime check, not as a prompt instruction, but as a structural constraint that makes non-compliant operations inexpressible.

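```langium
// Hohfeldian immunity: the consent slot accepts only declared consent types.
// ProcessingPurpose, StandardConsentRecord and GuardianReference are
// declared elsewhere in the grammar.
DataProcessingActivity:
    subject=DataSubject
    purpose=ProcessingPurpose
    consent=ConsentRecord;

// Constraint: if subject.category == 'minor', consent MUST be a
// GuardianConsentRecord. Because it relates two nodes, this production alone
// cannot express it; a validator attached to the grammar checks it, so a
// non-conformant activity is rejected before any agent processes it.
// No agent instruction can bypass it: it belongs to the language
// definition, not to runtime policy or a prompt.
ConsentRecord:
    StandardConsentRecord | GuardianConsentRecord;

GuardianConsentRecord:
    'guardian-consent' guardian=GuardianReference
    'for' dependent=[DataSubject:ID];
```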
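The minor-to-guardian pairing relates two nodes of an activity: the subject's category and the consent record's type. A minimal sketch of a check for it, of the kind Langium's validation framework runs before any agent sees a document. The interfaces are hand-written stand-ins for the AST types Langium would generate (the `$type` discriminator mirrors Langium's convention, and the `dependent` cross-reference is simplified to a name); `checkMinorConsent` is hypothetical and would be registered with the validator rather than called directly.

```typescript
type SubjectCategory = "consumer" | "employee" | "minor" | "dependent";

interface DataSubject {
  $type: "DataSubject";
  name: string;
  category: SubjectCategory;
}

interface StandardConsentRecord {
  $type: "StandardConsentRecord";
}

interface GuardianConsentRecord {
  $type: "GuardianConsentRecord";
  guardian: string;
  dependent: string; // simplified: the referenced DataSubject's name
}

type ConsentRecord = StandardConsentRecord | GuardianConsentRecord;

interface DataProcessingActivity {
  $type: "DataProcessingActivity";
  subject: DataSubject;
  purpose: string;
  consent: ConsentRecord;
}

// Returns diagnostics; an empty array means the activity conforms.
function checkMinorConsent(activity: DataProcessingActivity): string[] {
  const subject = activity.subject;
  const consent = activity.consent;
  if (subject.category !== "minor") return [];
  if (consent.$type !== "GuardianConsentRecord") {
    return [`Processing for minor "${subject.name}" requires guardian consent`];
  }
  if (consent.dependent !== subject.name) {
    return [`Guardian consent covers "${consent.dependent}", not "${subject.name}"`];
  }
  return [];
}

const minor: DataSubject = { $type: "DataSubject", name: "sam", category: "minor" };
console.log(checkMinorConsent({
  $type: "DataProcessingActivity",
  subject: minor,
  purpose: "analytics",
  consent: { $type: "StandardConsentRecord" },
}));
```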

Having mapped the taxonomy and the underlying logic, we can now describe what semantic dissonance looks like in practice and what mitigation patterns correspond to each degree.

Lexical dissonance signal: Agents in the same pipeline produce semantically inconsistent artifacts when queried about shared concepts. Ask the same semantic question to each agent and compare the response space — divergence indicates lexical drift.
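A minimal sketch of that probe, assuming each agent's answer to the same question has already been mapped to a short interpretation label (that mapping step is not shown, and `ProbeAnswers`, `groupByInterpretation` and `hasLexicalDrift` are hypothetical names). Drift shows up as more than one group of agents for a single shared term.

```typescript
// agent name -> interpretation label for one shared term
type ProbeAnswers = Record<string, string>;

// Group agents by the interpretation they reported, ignoring case and surrounding whitespace.
function groupByInterpretation(answers: ProbeAnswers): Record<string, string[]> {
  const groups: Record<string, string[]> = {};
  for (const agent of Object.keys(answers)) {
    const label = (answers[agent] || "").trim().toLowerCase();
    const bucket = groups[label] || [];
    bucket.push(agent);
    groups[label] = bucket;
  }
  return groups;
}

// Drift: the same term received more than one interpretation.
function hasLexicalDrift(answers: ProbeAnswers): boolean {
  return Object.keys(groupByInterpretation(answers)).length > 1;
}

// Hypothetical probe: "What does 'policy' mean in this task?"
const policyProbe: ProbeAnswers = {
  planner: "procedural-guideline",
  underwriter: "insurance-contract",
  executor: "Procedural-Guideline ",
};
console.log(groupByInterpretation(policyProbe));
console.log(hasLexicalDrift(policyProbe)); // true
```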

Structural dissonance signal: Downstream agents produce hallucinated or padded content to fill gaps created by upstream output that didn't match expected structure. Padding and hallucination in structured output contexts is almost always a composition failure signal, not a model capability failure.
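One inexpensive way to surface this signal, assuming upstream and downstream outputs have already been parsed into field maps and the downstream agent's expected fields are known (`findCompositionGaps` and `findPaddedFields` are hypothetical): any field the downstream agent expected, the upstream agent never emitted, and the downstream output nevertheless contains has no upstream source, and is a candidate for padding.

```typescript
type FieldMap = Record<string, unknown>;

// Fields the downstream agent expects but the upstream agent never emitted.
function findCompositionGaps(expected: string[], upstream: FieldMap): string[] {
  return expected.filter((field) => !(field in upstream));
}

// Gap fields the downstream output filled in anyway: content with no upstream source.
function findPaddedFields(expected: string[], upstream: FieldMap, downstream: FieldMap): string[] {
  return findCompositionGaps(expected, upstream).filter((field) => field in downstream);
}

const expectedFields = ["summary", "risks", "owner"];
const upstreamOut: FieldMap = { summary: "Churn is flat", risks: [] };
const downstreamOut: FieldMap = { summary: "Churn is flat", risks: [], owner: "Dana" };
console.log(findPaddedFields(expectedFields, upstreamOut, downstreamOut)); // [ 'owner' ]
```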

Normative dissonance signal: Actions that were not explicitly authorized appear in agent traces. Any action that no single agent was authorized to take but that emerged from the composition of agents is a normative dissonance event — and it deserves the same forensic attention as a security breach.
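A minimal audit over an agent trace, assuming each trace entry records the acting agent and the action, and that every agent's authorized actions are declared in one place (`TraceEntry`, `Authorizations` and `auditTrace` are hypothetical). It flags every action outside the acting agent's own authorization and marks it `emergent` when no agent in the system was authorized for it at all.

```typescript
interface TraceEntry {
  agent: string;
  action: string;
}

// agent name -> actions that agent is authorized to take
type Authorizations = Record<string, string[]>;

interface Finding {
  entry: TraceEntry;
  emergent: boolean; // true when no agent at all was authorized for the action
}

function auditTrace(trace: TraceEntry[], auth: Authorizations): Finding[] {
  const anyoneAuthorized = (action: string): boolean =>
    Object.keys(auth).some((agent) => (auth[agent] || []).indexOf(action) !== -1);
  const findings: Finding[] = [];
  for (const entry of trace) {
    const own = auth[entry.agent] || [];
    if (own.indexOf(entry.action) === -1) {
      findings.push({ entry, emergent: !anyoneAuthorized(entry.action) });
    }
  }
  return findings;
}

const traceAuth: Authorizations = { reader: ["read:basic"], writer: ["write:report"] };
const sampleTrace: TraceEntry[] = [
  { agent: "reader", action: "read:basic" },
  { agent: "writer", action: "read:basic" }, // outside writer's own authorization
  { agent: "writer", action: "export:raw" }, // no agent is authorized for this
];
for (const f of auditTrace(sampleTrace, traceAuth)) {
  console.log(f.entry.agent, f.entry.action, f.emergent ? "EMERGENT" : "out-of-scope");
}
```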

Ontology-grounded terminals: Replace all free-string terminals that carry semantic weight with enumerated or cross-referenced types. Every term that matters to the system's semantics must be declared, not implied.

Schema-pinned message formats: Agent communication formats are grammar productions, not JSON conventions. The exchange format is an artifact of the language definition and is as stable as the grammar version.

Deontic annotations in the grammar: Use Langium's validation framework to attach deontic constraints as semantic rules. A DataProcessingActivity involving a minor that references a StandardConsentRecord doesn't fail at runtime — it fails at validation time, before any agent ever processes it.

Immunity declarations as structural constraints: Hard constraints should not be runtime checks. They should be structural impossibilities. If an operation is forbidden under all circumstances, make it grammatically inexpressible rather than expressible-but-caught.

Power/liability scoping in orchestration grammars: The orchestration layer itself needs a grammar. Which agents can instruct which other agents, under what conditions, and with what authority scope — these should be productions in the orchestration DSL, not conventions encoded in system prompts.

I want to close with a reflection that connects this technical framework to a broader philosophical point, because I think the technical analysis points somewhere important.

In Vedic Mīmāṃsā philosophy (a favorite topic of mine), the concept of śābdabodha — verbal cognition — holds that meaning is not carried by words themselves but arises from the specific relational structure among words in a sentence. A word in isolation has no meaning; meaning is a function of the syntactic and semantic relations it enters into. This is not merely a philosophical position: it is a claim about the architecture of understanding.

What I am proposing with grammar-grounded multi-agent coordination is, in a sense, a computational instantiation of this insight. An agent operating without a shared semantic constitution is like a word without a sentence: it has distributional properties from training, statistical affinities, contextual associations — but not meaning in the sense that enables reliable coordination. Meaning, in a coordination-capable sense, requires a formal relational structure that all parties share.

The grammar is that structure. It is not a constraint on what agents can think. It is the precondition for what they can coherently communicate. And communication — not intelligence, not capability, not even alignment in the abstract — is what makes a collection of agents a system rather than a collection of capable individuals talking past each other.

This has a direct implication for how we build. If you want a multi-agent system that is coherent — not just most of the time but provably, architecturally coherent — then the grammar contract must be the first artifact you create, before agent prompts, before capability specifications, before orchestration logic. Everything else is built on top of the semantic constitution. Everything else is, without it, aspiration.

Semantic dissonance names a class of failure modes in multi-agent AI systems where shared communication channels mask divergent semantic contracts. The taxonomy distinguishes three degrees: lexical dissonance (shared terms, different referents), structural dissonance (valid outputs, incompatible schemas), and normative dissonance (locally authorized actions, globally impermissible outcomes).

Hohfeld's analysis of jural relations provides a principled foundation for specifying the permission architecture that normative dissonance requires. And Langium-based DSL grammars offer the only reliable mechanism for making these specifications operational — not as guidelines, but as structural constraints that define the boundaries of possible expression in a multi-agent system.

The grammar is the contract. Build it first.