Two Algorithms, Zero Shared Memory

When prior authorization meets retrospective denial, the real failure isn't procedural — it's semantic.

On January 7, 2025, Dr. Elisabeth Potter was in the middle of a bilateral DIEP flap reconstruction when she had to scrub out of the operating room to take a phone call from UnitedHealthcare.

Potter scrubbed out because a UnitedHealthcare representative wanted to know — while the surgery was in progress — whether the patient's overnight hospital stay was justified.

The surgery had already been pre-authorized. The clinical necessity had already been evaluated and approved. The patient was, at that moment, demonstrably mid-procedure. The representative on the phone had no access to the patient's medical records. UHC denied the overnight stay anyway.

Potter posted a video about the experience. It got 5.5 million views. What happened next is documented in clinical, prosecutorial detail by Rachel Ankerholz in "Authorized, Operated, Denied: The Approval That Wasn't" — read it before you read the rest of this. The short version: Potter received a defamation threat from Clare Locke (the same firm UHC has retained against other public critics), was removed from UHC's in-network provider list, and is now carrying roughly $5 million in debt. She had a surgical practice. She told the truth about what happened to her patient. She is being made an example of, in the most direct economic terms available.

The standard response to all this is that it's a policy problem. Better regulation. Stronger appeals. Tighter oversight of AI in claims adjudication. Fine — but that argument has been running for years and the situation has not improved. The reason it has not improved is that the policy frame is treating the symptom. The disease is architectural.

What I want to argue here is that this class of harm is not just unethical — it is structurally predictable, the inevitable output of an architecture that was never designed to be coherent in the first place. And that a properly designed semantic AI architecture would make it impossible by construction. Not harder. Not better-monitored. Impossible to represent.

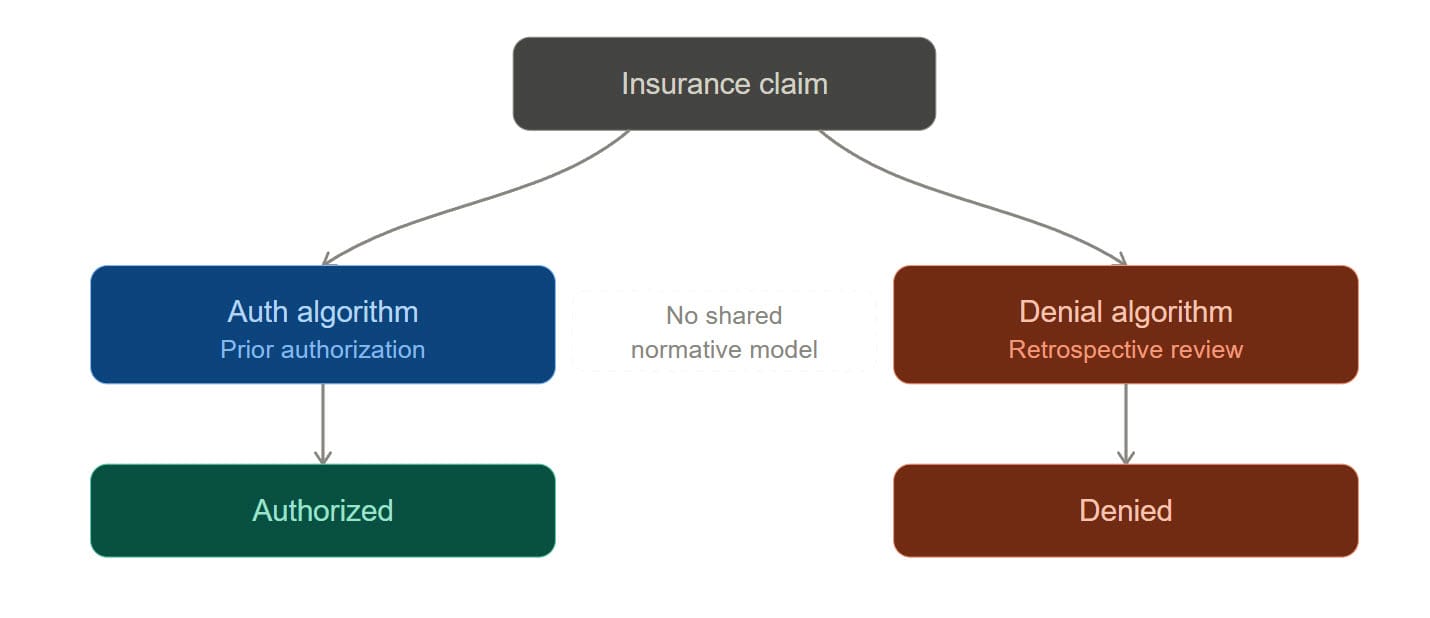

Ankerholz names the core problem cleanly: two AI systems are operating on the same claim, and they have nothing in common.

The first algorithm approves. The prior-auth algorithm runs against one set of criteria, under one set of incentive pressures, producing a structured output: authorized. How much human judgment sits behind automated outputs like that is an open question. Cigna's PXDX system allegedly processed 300,000 denials in two months with an average human review time of 1.2 seconds per case, meaning the human wasn't reviewing anything, just ratifying what the model had already decided.

The second algorithm denies. Post-service claims get screened by a different system, often a different vendor entirely — Cotiviti, Optum, Zelis, MultiPlan, EviCore. These vendors market their tools in terms of "payment accuracy" and "clinical chart validation." The plain-language version of the value proposition is: more denials sustained on appeal. One Cotiviti case study brags that a Blue Plan achieved "triple its original projected findings" after adopting their AI-powered review. Triple. That is not a quality metric. That is a revenue metric wearing a stethoscope.

Here is the architectural fact that should disturb every engineer reading this: those two algorithms do not share a normative model. They have no common ontology for what "authorized" means. They have no shared representation of the commitments the first decision created. They operate on the same claim number, but they are not, in any meaningful sense, reasoning about the same thing.

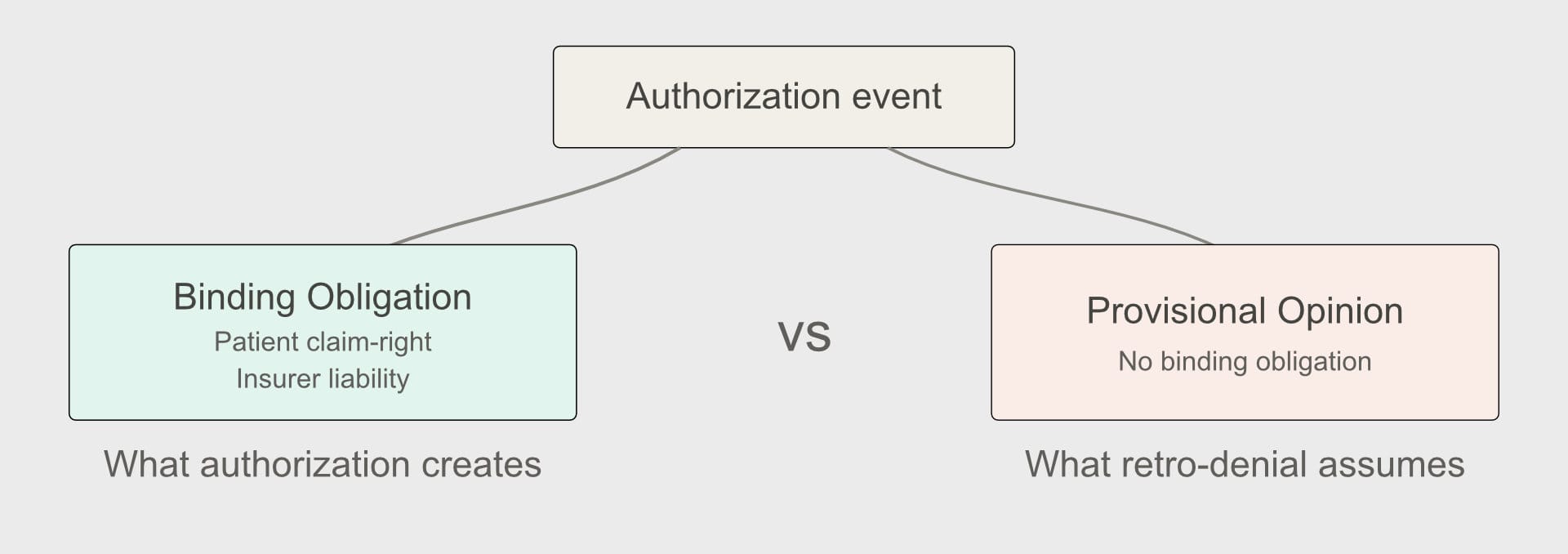

Let me be precise about the normative structure here, because the precision matters.

Wesley Hohfeld, a jurist writing in 1913, gave us the most rigorous vocabulary we have for legal relations. In his framework, legal positions aren't monolithic — "rights" aren't a single thing. They decompose into claims, privileges, powers, and immunities, each with a correlative on the other side of the relation.

Apply that framework to prior authorization and the structure becomes immediately clear.

When an insurer issues a prior authorization, it is exercising a power: it is changing the normative landscape. That exercise creates, on the insurer's side, a duty: an obligation to pay for the authorized service when performed according to the authorization's terms. On the patient and provider side, it creates a claim-right: a right to receive payment, correlative to the insurer's duty to pay.

This is not a controversial reading. It is what authorization means. A prior authorization that creates no binding obligation is not an authorization — it is a provisional opinion, and calling it an authorization is a category error with serious downstream consequences. Consequences like a surgeon scrubbing out of an active reconstruction to argue about a hospital stay.

Now look at retrospective denial through that lens. The retrospective denial system treats authorization as if it were merely a privilege — permission that can be revoked without creating the kind of normative residue a true claim-right would. The insurer is simultaneously asserting that (a) authorization created reliance-worthy rights sufficient to justify a surgical team opening a patient, and (b) those rights can be extinguished by the very evidence that they were relied upon — i.e., the procedure actually happened.

That's not just unfair. It's normatively incoherent. In Hohfeldian terms, you cannot hold both positions at once. The normative state that existed before Potter made her first incision — authorized — either created binding obligations or it didn't. If it did, the subsequent denial is not a "retrospective review." It is a breach. If it didn't, then "prior authorization" is a fraud perpetrated on patients and providers, because the label implies normative content it was never designed to deliver.
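The Hohfeldian reading above can be written down as data. What follows is a minimal sketch in Python, not anyone's production model: the names `Position`, `Relation`, and `authorize` are my own illustrative assumptions. It only shows that once an authorization is modeled as a claim-right, the insurer's duty falls out as its correlative.

```python
from dataclasses import dataclass
from enum import Enum


class Position(Enum):
    CLAIM = "claim"
    DUTY = "duty"
    PRIVILEGE = "privilege"
    NO_CLAIM = "no-claim"
    POWER = "power"
    LIABILITY = "liability"
    IMMUNITY = "immunity"
    DISABILITY = "disability"


# Hohfeld's correlative pairs: a position held by one party implies
# the paired position on the other side of the relation.
_PAIRS = [
    (Position.CLAIM, Position.DUTY),
    (Position.PRIVILEGE, Position.NO_CLAIM),
    (Position.POWER, Position.LIABILITY),
    (Position.IMMUNITY, Position.DISABILITY),
]
CORRELATIVE = {}
for a, b in _PAIRS:
    CORRELATIVE[a] = b
    CORRELATIVE[b] = a


@dataclass(frozen=True)
class Relation:
    holder: str
    position: Position
    counterparty: str
    content: str

    def correlative(self) -> "Relation":
        """Return the same relation as seen from the counterparty's side."""
        return Relation(
            self.counterparty, CORRELATIVE[self.position], self.holder, self.content
        )


def authorize(insurer: str, provider: str, service: str) -> Relation:
    """Hypothetical helper: exercising the insurer's power yields a provider claim-right."""
    return Relation(provider, Position.CLAIM, insurer, f"payment for {service}")
```

Calling `authorize(...)` and then `.correlative()` on the result yields a `DUTY` held by the insurer. Under this model there is no way to construct the provider's claim without also implying that duty, which is the whole point of the argument.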

Ankerholz raises the training data question and correctly identifies that these models are trained on historical denials — meaning they're trained on the outputs of reviewers whose incentives were never aligned with the patient. A model trained on cost-pressured human decisions learns to reproduce cost-pressured human decisions. The bias gets encoded, then laundered through algorithmic objectivity, then sold back to the industry that generated it.

This is accurate. But I want to name the deeper problem: these models have no semantic grounding in the normative domain they're operating in.

A model that predicts "what a cost-pressured reviewer would have denied" is not doing clinical review. It is doing pattern-matching on a proxy variable and reporting the result in clinical language. The clinical language is the problem. It creates the appearance of a normative judgment — this procedure was not medically necessary — while the underlying computation has no access to the normative concepts that phrase implies.

The detail Ankerholz flags as the most damning: UnitedHealth allegedly explored using AI to predict which denials were likely to be appealed, and which appeals were likely to be overturned — and to deny accordingly. Sit with that. That is not a model predicting medical necessity. That is a model predicting who will fight back. The clinical language on the denial letter is purely decorative at that point. It exists to satisfy a documentation requirement, not to describe what the model actually computed.

A proper semantic architecture would reject this. Not because someone wrote a rule against it, but because a well-formed normative ontology for claims adjudication cannot represent "probability of appeal" as a valid input to a medical necessity determination. The concept is outside the grammar. You cannot express that computation in the policy language without a type error.
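To make "outside the grammar" concrete, here is a small sketch, assuming a hypothetical input schema called `MedicalNecessityInput` with three illustrative fields. None of these names come from a real adjudication system. The idea is only that the determination's input type has no slot for appeal likelihood, so supplying one fails before any model runs.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class MedicalNecessityInput:
    # Illustrative fields only; a real schema would be far richer.
    diagnosis_codes: tuple
    procedure_codes: tuple
    clinical_findings: tuple


ALLOWED_FIELDS = {f.name for f in fields(MedicalNecessityInput)}


def build_input(raw: dict) -> MedicalNecessityInput:
    """Reject any key that the schema cannot express, such as appeal_probability."""
    unknown = set(raw) - ALLOWED_FIELDS
    if unknown:
        raise TypeError(
            f"not expressible in a medical necessity determination: {sorted(unknown)}"
        )
    return MedicalNecessityInput(**raw)
```

Passing a dictionary with an `appeal_probability` key raises `TypeError` at construction. This is a runtime check standing in for a static one; a DSL with a real type checker would reject the same thing at compile time.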

This is what a semantic constitution does: it doesn't just guide behavior, it constrains the representational space so that certain computations become structurally inexpressible.

Let me describe what this looks like concretely.

Authorization events should write to an immutable normative ledger — an append-only record of what normative states have been created, when, on what clinical basis, and under what terms. Not a document repository. Not a claim history. A machine-readable normative state machine where each event either creates, modifies, or extinguishes a specific Hohfeldian relation.

This ledger is event-sourced and hash-chained (the same pattern I use in consent provenance systems), which means every state transition is attributable, auditable, and tamper-evident: any retroactive rewrite breaks the chain and is detectable. You cannot quietly rewrite what the authorization event created, because the ledger exposes no such operation. The authorization didn't just record a decision. It wrote a normative state that subsequent agents must treat as a constitutional constraint.
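The hash-chaining pattern itself is simple enough to sketch. The following is a minimal illustration in Python using only `hashlib` and `json` from the standard library. The class name `NormativeLedger` and the payload shape are my assumptions for the example, and a real ledger would add signatures, durable storage, and access control.

```python
import hashlib
import json
from dataclasses import dataclass


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


@dataclass(frozen=True)
class Entry:
    prev_hash: str
    payload: dict
    entry_hash: str


class NormativeLedger:
    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    def append(self, payload: dict) -> Entry:
        """The only write operation: add a new event linked to the previous one."""
        prev = self._entries[-1].entry_hash if self._entries else self.GENESIS
        entry = Entry(prev, dict(payload), _digest(prev, payload))
        self._entries.append(entry)
        return entry

    def entries(self) -> tuple:
        return tuple(self._entries)

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = self.GENESIS
        for e in self._entries:
            if e.prev_hash != prev or e.entry_hash != _digest(prev, e.payload):
                return False
            prev = e.entry_hash
        return True
```

There is deliberately no update or delete method. If someone mutates a stored payload out of band, `verify()` returns `False`, which is what lets a downstream agent refuse to act on a ledger that has been altered.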

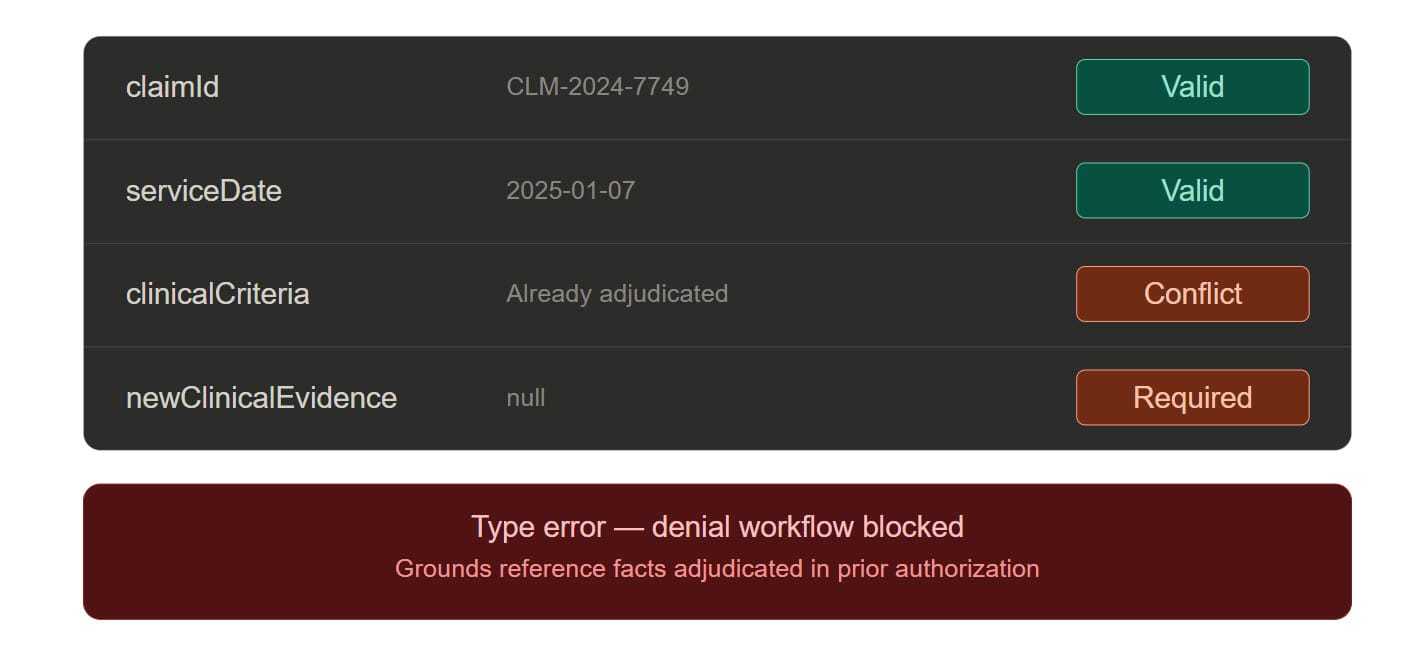

The semantic constitution for a claims adjudication system should be expressed as a domain-specific language — a PolicyAuthorization DSL — that makes the normative structure explicit and machine-verifiable.

In concrete terms, this DSL would:

The last point is the load-bearing one. Ankerholz notes that in many retrospective denials, the insurer is re-reviewing medical necessity "using clinical information it already had, adding only the evidence that its approval was acted on." In a DSL-governed system, that computation cannot be initiated. The type system rejects it. The retrospective review agent's input schema requires a newClinicalEvidence field that must be non-empty and must not overlap with the evidence already present in the authorization record. If you can't populate that field, the denial workflow cannot start.
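Here is what that input-schema rule could look like as a sketch. I am assuming evidence items carry stable identifiers and that the authorization record lists the identifiers it already considered. The Python field `new_clinical_evidence` corresponds to the `newClinicalEvidence` field described above; the class names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthorizationRecord:
    claim_id: str
    evidence_ids: frozenset  # evidence already evaluated at authorization time


@dataclass(frozen=True)
class RetrospectiveReviewRequest:
    authorization: AuthorizationRecord
    new_clinical_evidence: frozenset

    def __post_init__(self):
        # Rule 1: a review with no new evidence cannot be constructed.
        if not self.new_clinical_evidence:
            raise TypeError("newClinicalEvidence must be non-empty")
        # Rule 2: evidence already adjudicated does not count as new.
        overlap = self.new_clinical_evidence & self.authorization.evidence_ids
        if overlap:
            raise TypeError(
                f"evidence already adjudicated at authorization: {sorted(overlap)}"
            )
```

Because the checks live in the constructor, a denial workflow that takes a `RetrospectiveReviewRequest` as its argument can never receive one that re-reviews old evidence. The request object either exists and is valid, or it does not exist.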

Apply this to Potter's case directly. UHC's denial agent calls into the ledger and receives the authorization record. The record includes the clinical criteria evaluated, the outcome (authorized for procedure plus inpatient recovery), and the timestamp. The denial agent attempts to issue a denial on the overnight stay. The DSL asks: is there new clinical evidence not present in the authorization record that justifies this denial? No. The patient is, at the moment of the call, exactly as the authorization record described — anesthetized, opened, undergoing the authorized procedure. The denial does not type-check. It cannot be issued. The phone call doesn't happen.

The retrospective review agents — Cotiviti's model, Optum's model, anyone else's — operate inside a constitutional boundary defined by the DSL. They are not free to apply any criteria they choose. They can only evaluate facts that were not available at authorization time, and their outputs are validated against the normative ledger before a denial is issued.

If a review agent attempts to issue a denial that contradicts an authorization on grounds already adjudicated, the semantic validation layer rejects it — not as a policy matter requiring human review, but as a type error requiring the review agent to reformulate its conclusion or escalate to a genuinely new clinical determination.

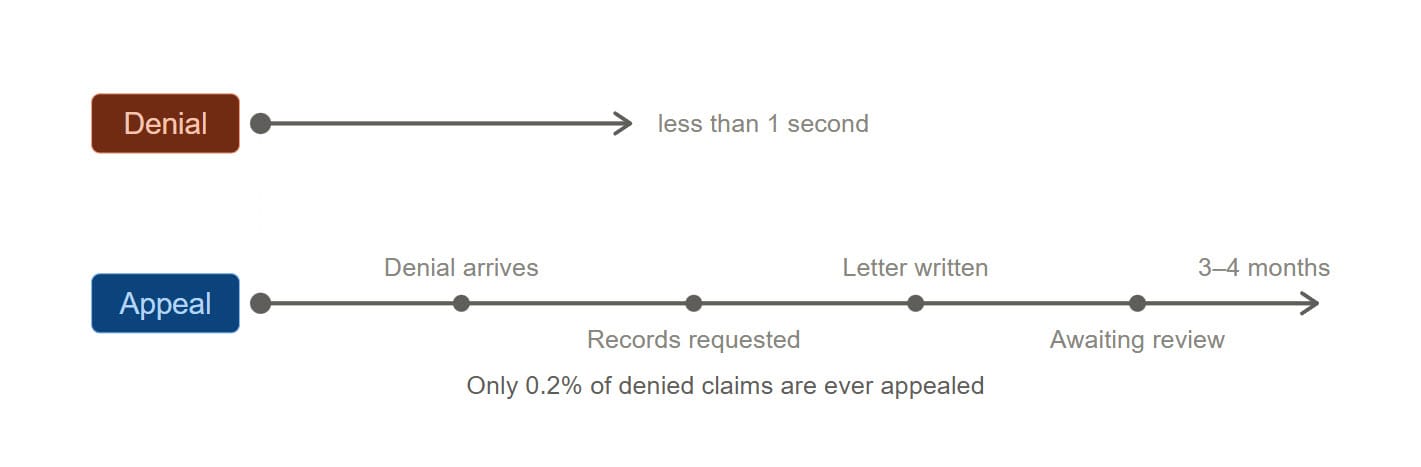

Ankerholz identifies the speed asymmetry as the mechanism by which the house always wins: denial runs at millisecond speed, appeal runs at human speed, and 99.8% of patients never appeal.

The standard response is: make appeals faster. That's a reasonable reform. But it does not address the underlying architecture.

The semantic fix is different: an agent holding the immutable normative ledger can generate a machine-speed preliminary reversal signal the moment a retrospective denial contradicts an existing authorization. Not a human-initiated appeal. An automated normative consistency check that fires the instant a denial event is proposed that conflicts with a prior commitment.

In practice: before a denial letter is generated, the system checks whether the denial can be coherently expressed given the existing normative state. If it cannot — because it contradicts an authorization on already-adjudicated grounds — it doesn't get mailed. The provider doesn't have to appeal. The patient doesn't have to fight. The incoherent state simply doesn't get written.
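A rough sketch of that pre-issuance check follows, under one stated assumption: each authorization records the grounds it adjudicated as a set of labels, and a proposed denial must cite at least one ground outside that set. The label strings and the function name `issue_denial_letter` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Authorization:
    claim_id: str
    adjudicated_grounds: frozenset


@dataclass(frozen=True)
class ProposedDenial:
    claim_id: str
    grounds: frozenset


def issue_denial_letter(denial: ProposedDenial, authorizations: dict) -> Optional[str]:
    """Return letter text, or None when the denial only repeats adjudicated grounds."""
    auth = authorizations.get(denial.claim_id)
    if auth is not None and denial.grounds <= auth.adjudicated_grounds:
        # Every cited ground was already decided at authorization: nothing is written.
        return None
    return f"Denial issued for claim {denial.claim_id}"
```

The check runs at machine speed, on the proposing side, before anything reaches a provider or patient. A denial that rests on a ground the authorization never addressed still goes through, so legitimate review is untouched.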

The 0.2% appeal rate isn't apathy. It's friction. Patients don't appeal because the process is engineered to exhaust them. Remove the structural source of the friction — the ability to issue semantically incoherent denials in the first place — and you don't need an appeals process for this class of error. The error doesn't occur.

Nobody in insurance.

The vendors operating in this space — Cotiviti, Optum, Zelis, MultiPlan, EviCore — are building faster denial engines. More sophisticated pattern-matching on richer claims data. Better prediction of which appeals will be filed. None of them are building a normative semantic layer, because a normative semantic layer would constrain what their models can output, and their customers are buying outputs, not constraints.

The regulatory environment is beginning to apply external pressure. The Senate Permanent Subcommittee on Investigations has criticized UHC, Humana, and CVS for using AI automation to deny Medicare Advantage post-acute care. But that scrutiny has focused on front-end denials. The back-end version — retrospective denial running through licensed vendor AI on pre-authorized claims — has not received the same attention. Regulation, as always, moves at human speed.

The architectural solution doesn't require waiting for regulation. It requires healthcare IT architects and health plan CTOs to recognize that they have built two systems normatively incoherent with each other, and that the incoherence is not a feature — it's a liability hiding in plain sight.

The class action exposure on nH Predict, with an alleged 90% reversal rate on appeal, is only the beginning. When the next wave of litigation starts naming the semantic incoherence between authorization and denial systems as evidence of willful design — and it will — the organizations that built a coherent normative architecture will have a defensible position. The ones that didn't will be explaining to a jury why their prior-authorization system and their retrospective-denial system didn't share a definition of "authorized."

Ankerholz ends her piece with a sentence that should be tattooed on the wall of every healthcare AI vendor's architecture review board: "A 90% error rate is only broken if the errors cost the company something."

My answer to that is architectural, not ethical. Don't make the errors cost more. Make them structurally impossible.

Not through policy guidelines that get ignored under cost pressure. Not through "human in the loop" requirements satisfied by a 1.2-second click. Through a domain-specific language where an authorization that has been relied upon cannot be contradicted on already-adjudicated grounds — because the grammar will not permit you to write that state transition.

The grammar is the contract. If you cannot express a normatively incoherent denial in the policy language, you cannot issue one. Not because someone reviewed it and caught it. Because the system cannot generate it.

Dr. Potter left a patient on the table to answer a phone call from a denial algorithm that had no record of what the authorization algorithm had already decided. She knew what was at stake if she didn't pick up, because she understood the system she was operating inside of. She should not have to understand that system. Her patient should not have been its collateral.

Two algorithms adjudicating the same claim need more than a shared claim number. They need a shared semantic constitution — a normative ledger neither can ignore, a grammar in which a denial that contradicts a prior authorization on already-adjudicated grounds simply cannot be written. Build that, and Potter's phone never rings.

That is not a utopian aspiration. It is a well-understood architectural pattern applied to a domain that has never demanded rigor from its AI systems.

It's time to demand it.

This post responds to Rachel Ankerholz's "Authorized, Operated, Denied: The Approval That Wasn't". Read it first.